What is data augmentation in deep learning?

- Featured Post

- Technology

- FILTER_Technology

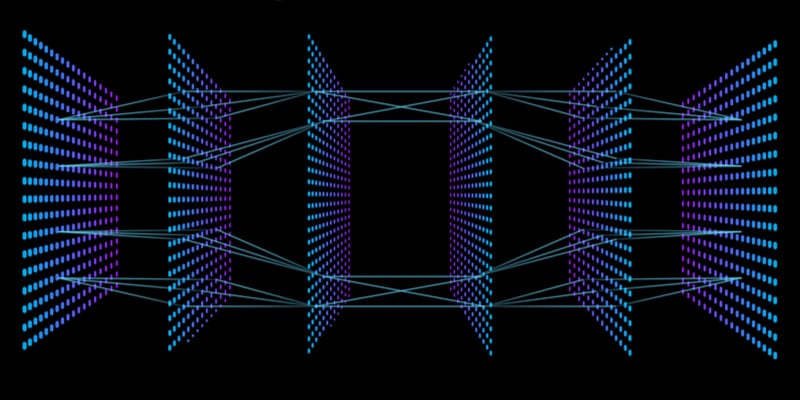

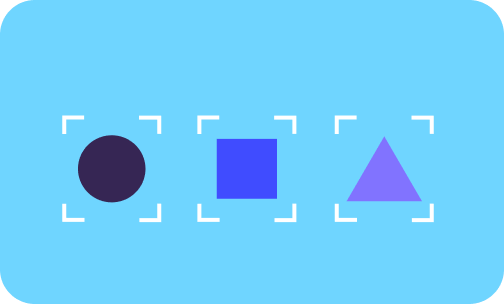

Data augmentation is the process of transforming images to create new ones, for training machine learning models. This is an important step when building datasets because modern machine learning models are very powerful; if they're given datasets that are too small, these models can start to ‘overfit’, a problem where the models just memorize the mappings between their inputs and expected outputs.

You can learn more about how a model learns from images in my previous article on data labelling.

Back to overfitting. For example, given an image of a cat in the training set with the expectation that the model outputs the label 'cat', a model that overfits will just memorize that this particular arrangement of pixels equals a cat, instead of learning general patterns (e.g. fluffy coat, small paws, whiskers, etc). A model that overfits will have very good performance on the training set but perform poorly on images that it hasn't seen before because it hasn't learned these general patterns.

Data augmentation increases the number of examples in the training set while also introducing more variety in what the model sees and learns from. Both these aspects make it more difficult for the model to simply memorize mappings while also encouraging the model to learn general patterns. While it is possible to collect more real world data, this is much more expensive and time consuming than using data augmentation techniques. So while it's always better to grow the real world dataset, data augmentation can be a good substitute when resources are constrained.

In the rest of this blog we'll talk about some:

- common data augmentation techniques

- advanced techniques

- niche techniques that are tailored and more helpful to the problems that we are working on here at Calipsa

Additionally while data augmentation is applicable for a variety of data types like images, text, and audio, we'll be focusing on data augmentation techniques for images since that's the most relevant to our product.

If you want to learn the basics of machine learning, take a look at our free ebook, "Machine Learning Explained":

Common Data Augmentation Techniques

Spatial Transformation

With spatial transformation techniques, pixels are moved around the image in set ways to create the augmented image.

Flipping

This is a very simple technique in which an image is flipped horizontally to produce a mirror image or flipped vertically to produce an image that is upside down. Generally mirror images are much more common since we are likely to see images like this in the real world as opposed to upside down images. In some domains such as cosmology or microbiology, vertical flipping is more useful since the sense of up and down aren't as hard-coded.

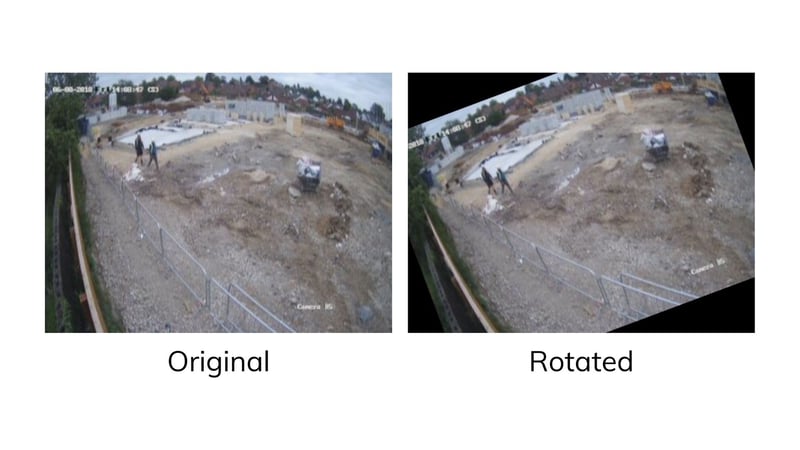

Rotation

With this technique, we rotate the entire image by a certain degree. It's important (in most domains) not to use angles that are too large since then you might end up with upside down images which are unlikely to be seen in the real world.

Translation

The entire image is shifted left/right and/or up/down by a certain amount. This will result in objects of interest appearing in different locations of the image frame after translation is applied. While some modern machine learning models like convolutional neural networks (CNNS) are built to be invariant to this type of change in the image (e.g. the model should predict a 'bird' label for an image of a bird in the sky regardless of whether the bird appears in the top left corner or bottom right corner), it doesn't hurt to still include this type of data augmentation.

Cropping

Given an image, we select part of the image (normally a square or rectangular section), take a crop of this selection, and then resize the crop to the original size of the image. By creating a new image that only features a part of the original image, the model is forced to learn how to make a decision with limited information.

Colour Transformation

With colour transformation techniques, the spatial aspect of the image is normally preserved while the values of the pixels are edited.

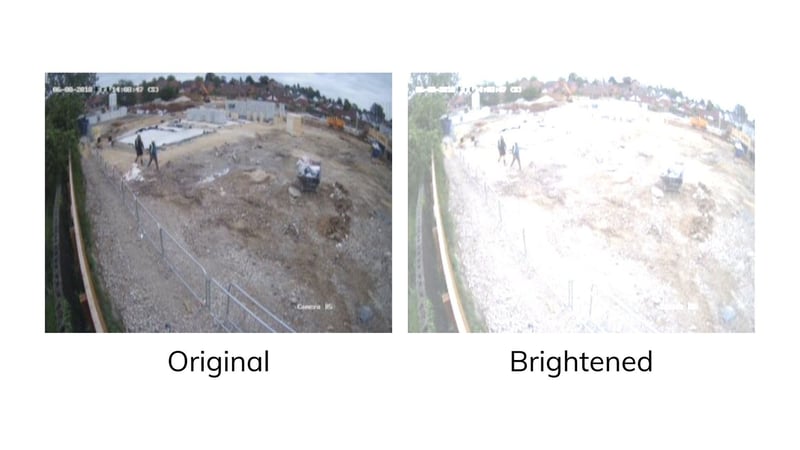

Brightness

The pixel values of the image are either increased to result in a lighter, brighter image or reduced to result in a darker, dimmer image. This is especially useful for CCTV as weather conditions or time of day can influence how bright an image is, and by using this data augmentation technique, we can help the model become more robust to these changes.

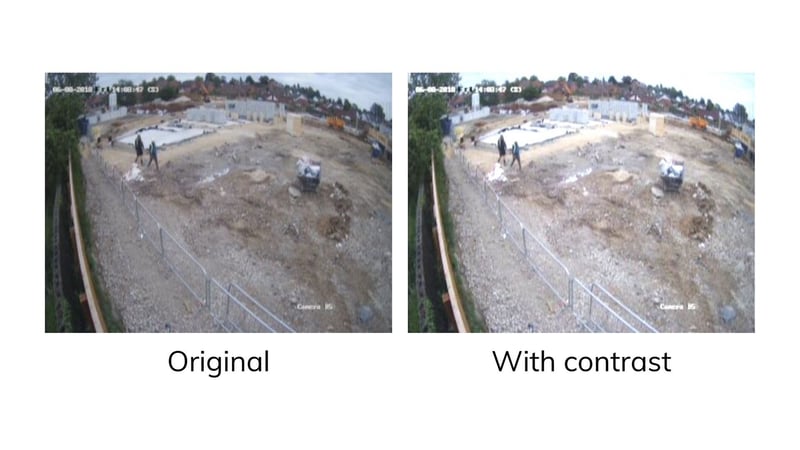

Contrast

Contrast is the difference between the bright and dark parts of an image. Increasing the contrast generally involves making the bright parts of the image brighter and the dark parts darker. This technique is useful as it can help highlight certain features in the image and make them stand out more to the model.

Advanced Data Augmentation Techniques

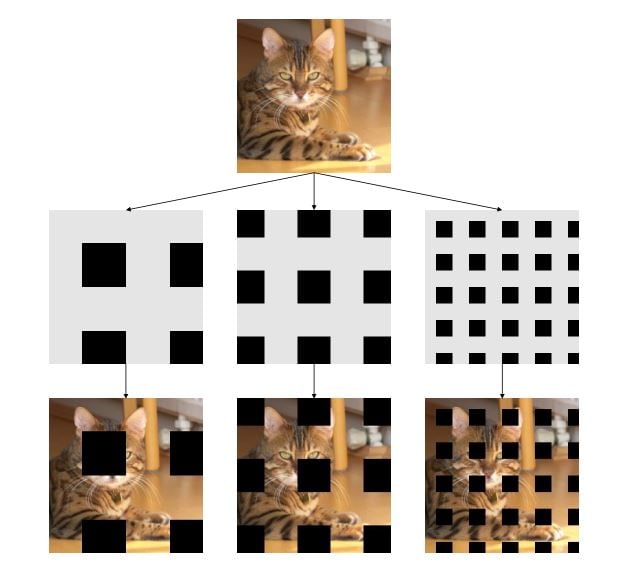

GridMask

Unlike the above techniques of spatial and colour transformations, GridMask falls under a third set of transformation techniques which we can refer to as 'information deletion'. With these techniques, parts of the image are removed by setting the pixels to 0 or placing some patch over that part of the image, thereby deleting information.

While this might seem unusual at first because real world images are unlikely to have artefacts like these, there are some similar cases. Objects in the real world images are often occluded where some of the object is blocked from view by another object. Image deletion techniques like GridMask help simulate this real world scenario.

Another useful aspect about information deletion is that it reduces a model's dependency on one particular aspect of an image. For example, imagine that we had a model that was tasked with classifying dog breeds. One way it might do this is to focus just on the ears of the dog and always make predictions based on this. However, in a real world image that the model hadn't seen before, perhaps the ears might be occluded or simply not visible due to the camera angle.

Information deletion might have helped with this as during training, there would have been some images in which the pixels containing the ears were not present. Thus the model would have been forced to branch out and rely on other features (e.g. look at the ears and nose and tail to determine the dog breed).

One of the difficulties in using information deletion techniques is picking the right amount of information to remove and the right part of the image to remove. If too little of the image is removed, then the resulting augmented image might look too similar to the original image and it would be as if we hadn't applied any augmentation at all. On the other hand, if we remove too much of the image, we might remove all the useful information and the model might not have enough to work with.

GridMask removes a grid of patches from images which they found empirically achieves a good balance between removing too little and too much information. Additionally, they also adjust the probability of removal as the model gets better. So at the start of training, no images are augmented so that the model has an easy time learning. As training progresses, the probability that an image is augmented with GridMask increases. We can view this as providing more difficult images for the model to learn from only after we've let it learn from simpler images.

AutoAugment

So far we've covered specific techniques that can be applied in isolation or combination. However there are still manual decisions that have to be made over:

- which techniques to use?

- to what degree should they be used?

- what should the order of operations be?

This is where AutoAugment comes into play. AutoAugment is an algorithm that can search over the space of these decisions and suggest good data augmentation policies. The original work uses reinforcement learning to search over 16 different data augmentation techniques to find combinations that work best for different datasets. The policies found by this algorithm were found to outperform manual policies and moreover, they found that policies that were learned from one dataset worked well with other datasets. This is important since AutoAugment can be computationally expensive to run.

By coming up with an algorithm that produces general data augmentation policies, this allows under-resourced groups to still use state of the art data augmentation policies without going over budget. Furthermore, AutoAugment is designed such that new data augmentation techniques can be integrated into the search space allowing for better policies in the future once better data augmentation techniques are discovered.

Niche Data Augmentation Techniques

We've covered some basic and advanced techniques and at Calipsa we use a mix of all of the above. However, given that we've been working on this problem for a few years, we've developed and utilized some other techniques that are more specific to our domain of false alarm filtering and CCTV video analytics.

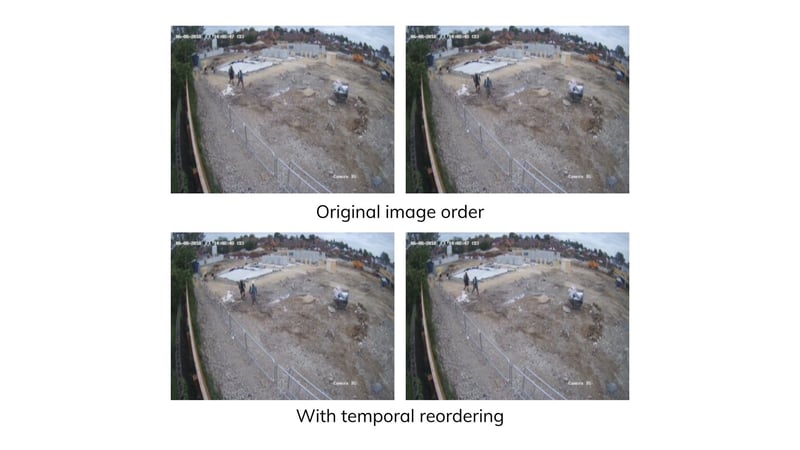

Temporal Reordering

Most of the techniques we've talked about above work well on single images. However given that we work with multiple images from a camera, we can try and incorporate other augmentation techniques specific to video data. With temporal reordering, given a pair of images, we can reverse the order of the images and present this to the model as a different training example. This is a very cheap augmentation technique to carry out and can immediately double the number of examples that the model has to work with.

Different Camera Types

When we first started false alarm filtering, most of the cameras produced images that were quite similar in terms of resolution and quality. As time progressed however, we increased our product offering to include low-resolution and thermal cameras. When this first started, we created two separate models for these cameras. However we have since been able to collect more data from low-resolution and thermal cameras and all data is now part of the same training set.

We can view this as augmenting the normal dataset with low-resolution and thermal data and we can also view this as augmenting the low-resolution dataset with normal and thermal images and the thermal dataset with low-resolution and normal images. We've found that putting all the images in the same set and applying data augmentation on top of this has led to much better results and also allowed us to deal with just one model instead of three which simplifies development and maintenance.

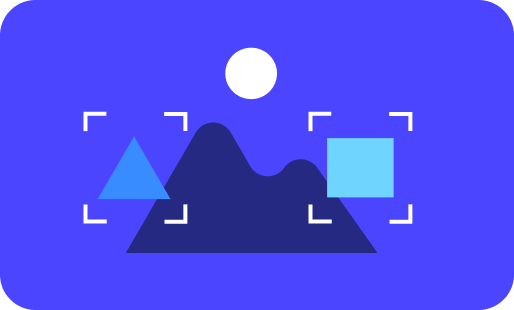

Background Augmentation

When working on our visual search offerings, we found that the model we were training would sometimes label different people with the same identity because their background looked very similar. This highlighted to us that our model was relying on the wrong cue (the background) when comparing cropped images of people.

To alleviate this issue, we introduced an augmentation technique that we call 'background augmentation'. We segment the person out from their crop and paste this segmented image onto a different background. By doing so, we are implicitly telling the model to ignore the background of the person and focus on key features on the person instead. After introducing this technique, we found that the number of instances of the model getting confused due to background similarity dropped drastically.

While we've covered quite a few techniques in this post, there are plenty more out there. For those of you looking to try out techniques for your models, even using simple data augmentation techniques like flipping images or changing the brightness can lead to huge performance improvements. Beyond the simple techniques, this is still an active field with researchers regularly coming up with better techniques.

Rest assured, as we continue to grow our product offering, we will stay on top of the latest and best data augmentation techniques to incorporate them into our pipelines while also developing our own in-house techniques in the pursuit of better outcomes for our customers.

If you want to learn even more about machine learning, check out our free ebook, "Machine Learning Explained":

No comments